Recent updates 🗞️

📉 UAE exits OPEC, oil markets reprice supply

🛢️ JPMorgan warns OECD oil inventories run dry by end-May

💻 Cerebras targets $26.6B IPO, largest tech listing of 2026

🎥 Watching “Pluribus”

YTD Portfolio Performance: +27.30%

I’ve been thinking a lot lately about electricity bills. Not because I’m particularly frugal, but because I moved to Texas last year, and my electricity bill here is genuinely higher than anything I paid on the East Coast. That surprised me. Texas has cheap, abundant energy, right? That’s the story. So I started poking around the numbers.

What I found was interesting on its own. But when I layered it on top of some recent IMF research on AI’s power demands, it clicked into something bigger: a thesis I haven’t seen talked about nearly enough in the investing world.

Here’s the short version. Everyone is buying the AI stack from the top down: software plays, GPU manufacturers, cloud platforms, “picks and shovels.” But the actual binding constraint on how fast AI grows isn’t compute. It isn’t talent. It isn’t even regulation. It’s kilowatt-hours. And right now, the investment implications of that are hiding in plain sight.

The Numbers Are Staggering (And Most Investors Are Ignoring Them)

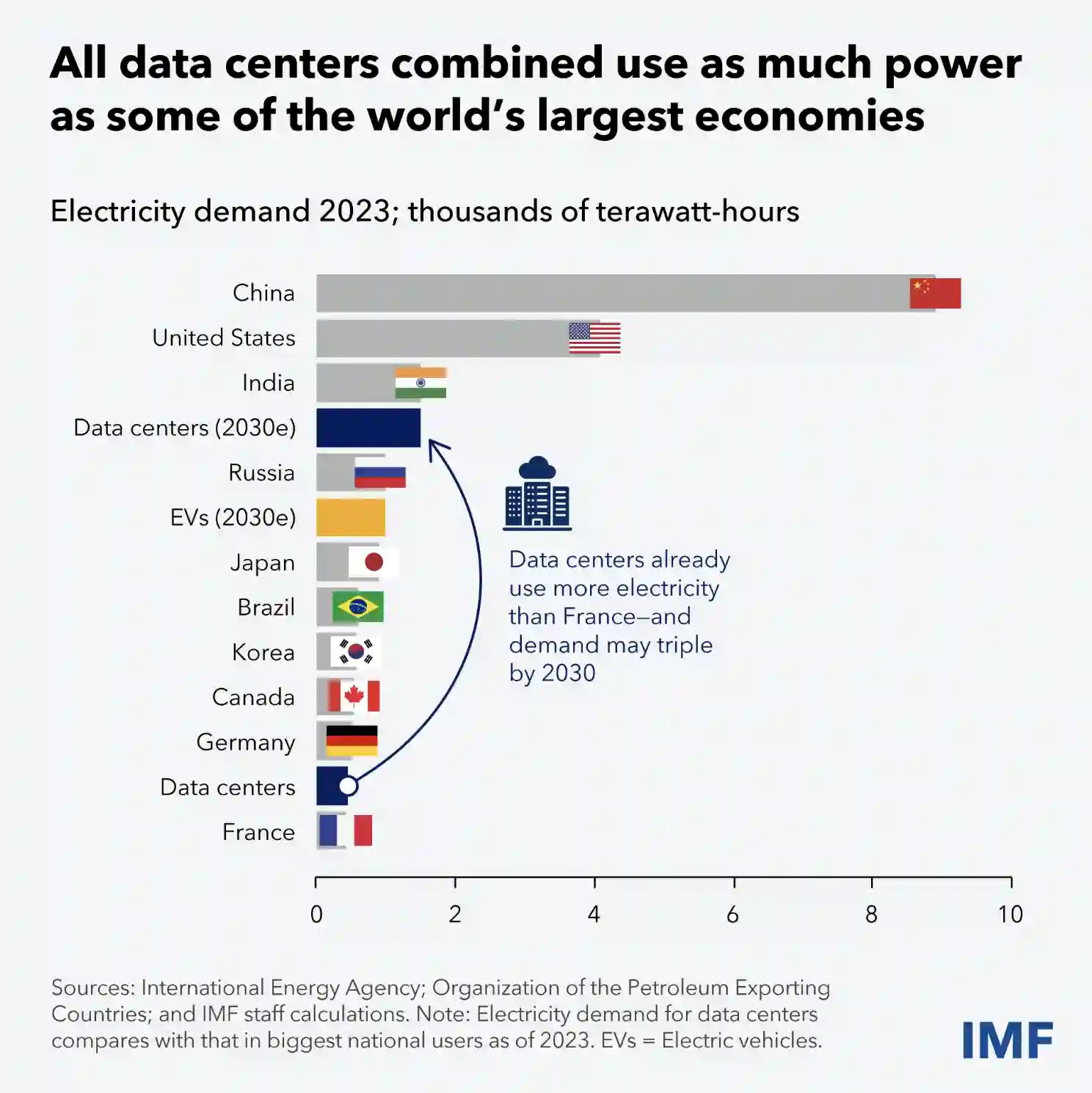

The IMF’s latest research put it plainly: AI needs more abundant power supplies to keep driving economic growth. That’s not a throwaway line. It’s a structural warning from one of the world’s most data-driven economic institutions.

Let me make this concrete. A single training run for a frontier-scale large language model can consume as much electricity as thousands of U.S. homes use in an entire year. And that’s before we get to inference: the compute that runs every time you type a prompt, generate an image, or ask an AI agent to handle a task. Inference is happening billions of times a day, and that number is compounding fast. Data centers globally are on track to roughly double their energy consumption by the end of this decade. The grid was not built for this.
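Here’s a quick back-of-the-envelope on what “roughly double by the end of this decade” means year by year. The base year is my assumption, not the source’s. Counting five or six years to 2030 (from 2025 or 2024), a doubling over n years implies a compound annual growth rate g of:

```latex
(1+g)^{n} = 2 \;\Longrightarrow\; g = 2^{1/n} - 1,
\qquad n = 6:\; g = 2^{1/6} - 1 \approx 0.122,
\qquad n = 5:\; g = 2^{1/5} - 1 \approx 0.149
```

In other words, that is data center demand compounding at something like 12 to 15 percent a year, every year, for the rest of the decade.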

AI-driven rise in electricity demand could add 1.7 gigatons of global greenhouse gas emissions between 2025 and 2030

Every chip cycle, every memory access, every interconnect hop draws power. Nearly all of that power ends up as heat, and removing the heat takes still more power. But I hadn’t thought through what that means at scale, across millions of GPUs running 24/7, until I sat down with the data. The numbers are genuinely striking.

Here’s what’s interesting, though: you’d think this would be the talk of every investor call. It’s not. The conversation is still mostly about which LLM has the best benchmark scores and which hyperscaler is winning enterprise contracts.

Texas Is Ground Zero — And I’m Watching It From My Backyard

This is where it gets personal. The Dallas Fed just published a piece that initially caught my eye for purely selfish reasons: why is my electricity bill so high? Their finding: Texans pay more per month on electricity than Californians, even though California’s per-unit electricity price is actually higher. The reason is consumption. We use significantly more power here.

Now layer on this: Texas, specifically ERCOT (our independent grid), is where a disproportionate share of new AI data centers are being built. The reasons are obvious: vast land, lighter regulation, historically cheap power, and a business-friendly environment. Drive outside Austin or Dallas and you’ll see the construction. Massive, windowless buildings are going up fast.

But here’s the tension nobody’s talking about. Texas built its reputation on cheap, abundant power, and the AI buildout is about to stress-test that assumption. ERCOT has already seen demand projections revised sharply upward. The grid that nearly buckled during Winter Storm Uri in 2021 is now being asked to absorb the electricity appetite of thousands of GPU clusters running continuously. That comes on top of normal residential and industrial load growth from a state that’s adding population as fast as anywhere in the country.

So if AI needs the grid, and the grid needs to grow, who actually benefits from all of this?
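A quick aside on the Dallas Fed finding, because it comes down to one line of arithmetic. I treat a monthly bill B as the per-kWh price p times the kWh used q. That is my simplification: it ignores fixed charges, fees, and taxes. Under it, Texans can pay more each month despite a lower price exactly when their usage exceeds Californians’ by a bigger ratio than the price gap:

```latex
B = p \cdot q
\qquad\Longrightarrow\qquad
B_{\mathrm{TX}} > B_{\mathrm{CA}}
\;\iff\; p_{\mathrm{TX}}\, q_{\mathrm{TX}} > p_{\mathrm{CA}}\, q_{\mathrm{CA}}
\;\iff\; \frac{q_{\mathrm{TX}}}{q_{\mathrm{CA}}} > \frac{p_{\mathrm{CA}}}{p_{\mathrm{TX}}}
```

California’s price is higher, so the right-hand ratio is above 1. The bill gap only appears if Texas usage outruns the price difference, which is the consumption story the Dallas Fed points to.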

The Contrarian Trade: You’re Looking at the Wrong Layer of the Stack

This is what I keep coming back to. Most retail investors, and frankly a lot of institutional ones, are buying AI from the top down: NVIDIA for the chips, Microsoft and Google for the platforms, a basket of software companies deploying AI features. The logic is sound. These are real businesses with real AI revenue.

But there’s a layer below all of that which gets almost no attention: the infrastructure that makes any of it possible at the physical level. Not servers. Not fiber. Electricity.

And the companies that control the electrons flowing into data centers have something chip companies don’t: durable, local, monopoly-like pricing power. Charlie Munger called these “toll booth” businesses. You want to cross the bridge? You pay the toll. You want to run your AI cluster? You pay the utility.

A few specific angles worth watching:

- Utilities with heavy data center exposure. Some regulated utilities are quietly signing long-term power purchase agreements with hyperscalers at favorable rates. This is steady, predictable cash flow layered on top of existing rate-base growth. Boring, until you realize the volumes involved.

- The nuclear renaissance. Microsoft, Google, and Amazon have all signed nuclear power purchase agreements in the last 18 months. Small modular reactors are years away from scale, but the existing nuclear fleet, previously considered stranded, is suddenly valuable again. Three Mile Island is being restarted to supply Microsoft’s data centers under a long-term power purchase agreement. That’s not a small signal.

- Grid infrastructure, the unsexy stuff. Transformers. Transmission lines. Substations. The average large power transformer takes 1–2 years to manufacture and install, and the U.S. is already facing a transformer shortage. If you want to find a chokepoint in the AI buildout, this might be it. Only a handful of companies make this equipment, and demand is about to massively outpace supply.

- Natural gas as the bridge fuel nobody wants to admit they need. Renewables are growing fast, but they’re intermittent. AI data centers need reliable, 24/7 baseload power. Until storage technology catches up, natural gas is filling that gap. This is awkward for the ESG narrative, but the data is pretty clear about where the electrons are actually coming from.

The contrarian insight: the biggest near-term beneficiary of the AI boom might not be an AI company at all. It might be the entity that owns the wire connecting the data center to the grid.

The Risks Worth Naming

I’d be doing you a disservice if I just left it there. A few things could complicate this thesis:

- Grid buildout is slow. Permitting for new transmission infrastructure in the U.S. takes years, sometimes over a decade. Demand is arriving faster than supply can respond. That creates near-term opportunity but also genuine fragility. A hot summer stress-testing ERCOT while data centers are drawing peak load is a real risk scenario.

- AI efficiency gains and the Jevons Paradox. Models are getting more efficient. Models that match GPT-4 on many benchmarks can now run on hardware that would have been laughably underpowered for that job two years ago. Doesn’t this reduce the power problem? Historically, often not. Efficiency gains make a technology cheaper, more accessible, and more widespread, which can increase total consumption. More people using cheaper AI means more total compute, and more total compute means more total power. This is the Jevons Paradox. Jevons first described it for coal in 1865, and energy economists have documented versions of it ever since.

- The Middle East variable. The ongoing conflict is creating oil and gas price uncertainty that ripples through electricity input costs, particularly for natural gas generation. This is a risk to both the demand side (economic slowdown) and the supply side (fuel costs) of the energy equation.

- Regulatory wildcards. Utility rate-setting is a regulatory process. If data centers start drawing massive amounts of power, regulators may eventually require them to bear more of the infrastructure cost directly. That could compress the margin for whoever built the generation capacity expecting stable long-term contracts.

I’m still working through what exactly this means for my own portfolio. But the research is too compelling to sit on without thinking it through carefully.
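To make the Jevons point concrete, here’s a toy model in Python. It rests on two assumptions of my own, not anything from the research above. First, the price of an AI query is proportional to the energy it uses. Second, demand for queries has a constant price elasticity. Under those assumptions, total energy scales with energy-per-query raised to the power (1 minus elasticity). So a more efficient query only raises total consumption when demand is elastic, meaning elasticity above 1. The function names and elasticity values are hypothetical and illustrative, not estimates.

```python
"""Toy model of the Jevons Paradox for AI compute (illustrative only).

Hypothetical assumptions, not taken from any source:
  * the price of a query is proportional to the energy it uses;
  * demand for queries has a constant price elasticity.
"""


def total_energy(energy_per_query: float, elasticity: float, scale: float = 1.0) -> float:
    """Return total energy = energy per query * queries demanded.

    Queries demanded follow a constant-elasticity curve,
    queries = scale * price ** (-elasticity), with price taken to be
    proportional to energy_per_query (the proportionality constant is
    folded into `scale`).
    """
    if energy_per_query <= 0:
        raise ValueError("energy_per_query must be positive")
    queries = scale * energy_per_query ** (-elasticity)
    return energy_per_query * queries


def efficiency_effect(improvement: float, elasticity: float) -> float:
    """Ratio of total energy after vs. before making each query `improvement` times more efficient."""
    before = total_energy(1.0, elasticity)
    after = total_energy(1.0 / improvement, elasticity)
    return after / before


if __name__ == "__main__":
    # Halve the energy per query under three hypothetical demand elasticities.
    for eps in (0.5, 1.0, 1.5):
        ratio = efficiency_effect(improvement=2.0, elasticity=eps)
        print(f"elasticity={eps}: total energy x{ratio:.2f}")
```

Running the script prints total-energy multipliers of x0.71, x1.00, and x1.41. Halving the energy per query cuts total use when demand is inelastic and leaves it flat at elasticity 1. When demand is elastic, it raises total use by about 41%. Which regime AI is actually in is an empirical question. The sketch only shows why the answer hinges on it.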

What I’m Doing With This

I’ll be transparent about where I am in my thinking. I’ve started looking more seriously at utility ETFs with significant data center exposure, and at a handful of individual names in grid infrastructure. I’m not ready to name names yet. I want to do more work on the regulatory exposure and balance sheet quality. But the thesis feels right to me, and I’d rather be early and cautious than late and certain.

What I’m not doing is dumping my existing AI-exposed positions. I still think the software and compute layer will generate enormous value over time. But I’m increasingly thinking of energy infrastructure as the floor of the AI trade: the thing that has to be true for any of the rest of it to work. If the grid can’t keep up, nothing else matters.

Further Reading

- IMF Blog: AI Needs More Abundant Power Supplies to Keep Driving Economic Growth

- Dallas Fed Economics: Californians spend less on electricity than Texans despite higher prices (April 14, 2026)

- IMF: New Skills and AI Are Reshaping the Future of Work

- Dallas Fed: Implications of the Iran war for U.S. inflation (April 17, 2026)

Cheers 🥂